CLICKS, BEEPS, BUZZES,

The disappearing sounds of computer activity

Auditory cues play an important role in our lives. They warn us, inform us, and help us navigate and understand the world around us. Many of these sonic cues are produced by the mechanical activity of everyday technology such as tools, appliances and means of transportation. These sounds make us aware of processes that are operating beyond our sight. Yet one of the most dominant and increasingly omnipresent processes on which we heavily depend is becoming silent: computation.

by Mark van den Heuvel

Although computer activity used to make all kinds of sounds, technological developments are about to make them obsolete. What is currently left are a few mechanical sounds and some audio skeuomorphs.

Audio skeuomorphs are emulations of sounds that were once inherent in the original device.

However, there seems to be limited discourse about the possible impact of this loss. How will this silence affect the Human-Computer relationship, as the disappearance of operational sounds obfuscates the nature and origin of our computational devices? In this thesis I will unpack how this growing silence contributes to the further detachment of computation from the material world. Additionally, I argue that it affects the critical engagement of the next generation of users, for whom silence is the unquestioned standard.

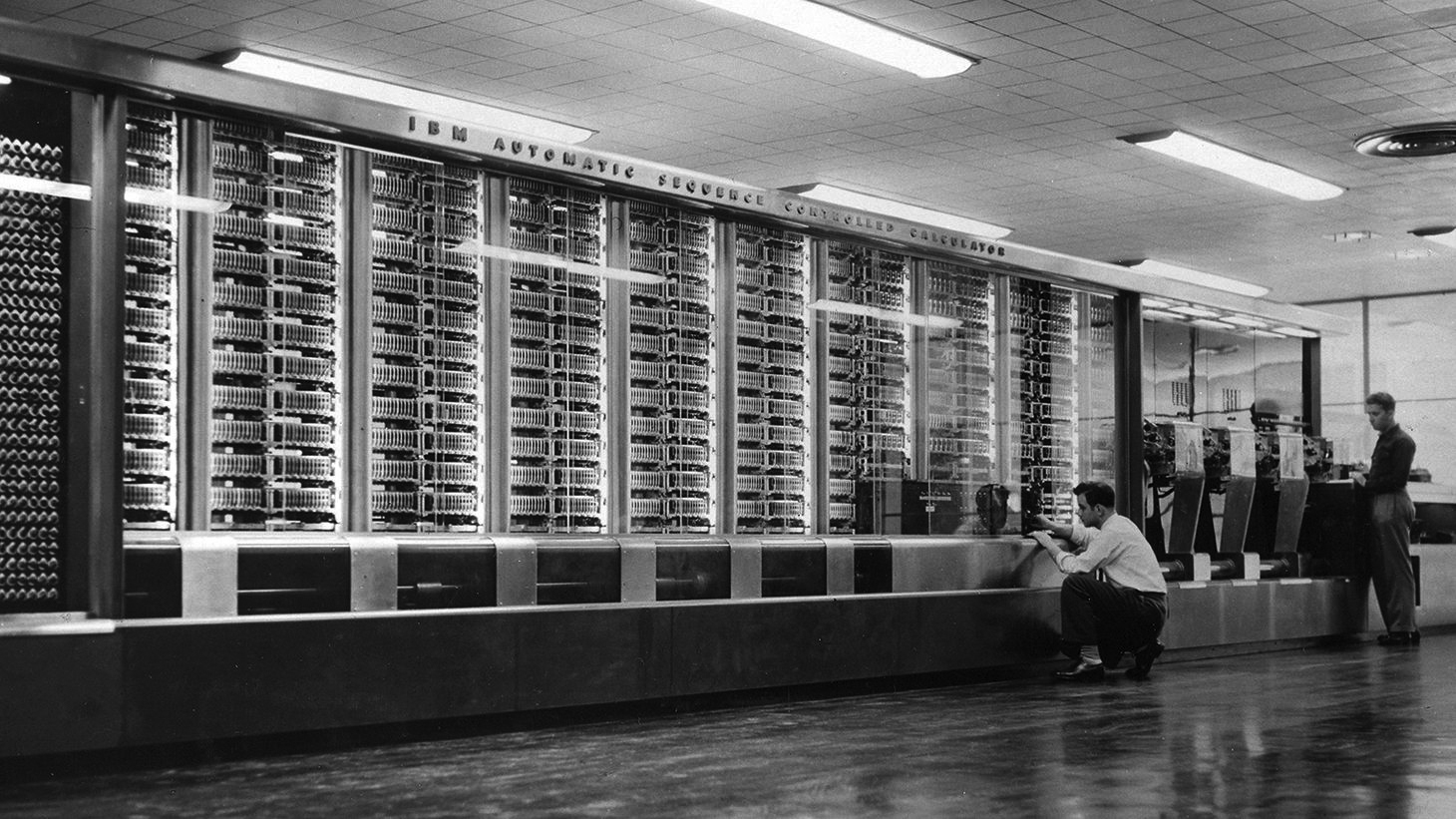

The ENIAC (1943) was one of the first programmable, electronic, digital general-purpose computers. It was specifically designed for US warfare and consisted of 19,000 vacuum tubes which produced a lot of noise.

In the early days of computing, operators were able to monitor processes and computer activity through sound. Originating from sonification of the system's mechanics and components, these audible cues functioned as a valuable source of information for detecting errors in calculation routines. When computers became more silent, operators regretted this loss. This eventually led to the reintroduction of sound, in order to restore the extra layer of sensory information and provide users with audible system feedback. These cues later became known as User Interface Sounds.

Other processes of computer activity also made sound. The clattering sound of loading data from punch cards, the spinning sounds of loading software from media such as a floppy disk and magnetic tape, the buzzing sound of the CPU, cooling mechanisms, the screeching sound of networking via dial-up modems, to name a few.

Today, these sounds have almost disappeared. These ever so noisy processes have been replaced by silent successors: storing data in the cloud, networking over Wi-Fi or Bluetooth, CPU activity. The sound of the fan from our aging laptops might be one of the last sonic traces that connects us to the material world of computer activity. Now that we are at the tipping point of entering complete silence, we may wonder whether we should care.

(Spoiler: The answer is yes.)

Bodily Sonification

I hear the sound of boiling water swelling in the background. As it gets increasingly louder, it is suddenly interrupted by a click. This notification tells me the water is ready to make some tea. Very convenient. I already forgot I had turned it on.

I am currently working in my studio. It is quiet. In the background, the radiator is clicking softly. "Don't forget to turn it off when you leave", I think to myself. I hear a train approaching the nearby station. It is a low, comforting, cinematic sound pattern. I know that within a minute it will reach the station, and people will get on and off the train before it moves on to the next station. I quickly think about the odds that there will be people on the train that I know.

This gentle wandering of thoughts is suddenly interrupted by a noise coming from deep inside my body. My stomach is demanding my attention by making a loud growling sound. "Just give me a minute while I finish this sentence before getting some lunch", I tell my stomach.

My keyboard is clicking as I am completing the sentence. While I am listening to music on YouTube, I am backing up my hard drive at the same time. Suddenly I realize the machine in front of me, which is working really hard to perform these tasks, is dead silent...

The origins of audible cues in computing

In the 1940s, computers were giant machines that were manually operated by multiple people. Back then, engineers could hear by the patterns in sounds if the mainframe was operating correctly or if irregularities and errors occurred. (Ploeger 2019) The mechanical sounds produced by clicking relays and vacuum tubes going on and off accompanied by circuit sonification functioned as a valuable source of information to the operators.

In the industrial and engineering field, auditory information was already commonly used to monitor certain processes. Karin Bijsterveld, Professor of Science, Technology & Modern Culture, writes in Listening to Machines that: "....engineers had often considered industrial noise as a sign of inefficiently running machines. What is more, the specific character of the mechanical noises informed them about the inefficiencies’ causes. This practice of listening to machines in order to diagnose the origins of mechanical faults was also evident in car repair." (Bijsterveld 2006) In many other areas, familiar sound patterns were a comfort to engineers, workers and mechanics, as unusual noises suggested material problems. In the case of car repair, irregularities in sound patterns were not only important for the mechanics but also for drivers themselves, as Bijsterveld explains.

Later, in the early 60s, computers still had limited capabilities in displaying information about errors in their operations. Screens as we know them today were not implemented yet. Mainframe computers, which were still considered to be calculators at the time, could only print out error reports on paper afterwards. Flashing lightbulbs were used to represent memory registers. Sound thus provided a valuable layer of sensory information on computational routines.

The IBM Automatic Sequence Controlled Calculator (ASCC), known as the Harvard Mark I, was a general purpose electromechanical computer that was used during the last part of World War II. (source: Wikipedia)

The introduction of transistors from the mid-50s revolutionised computer technology. In addition to many benefits, it also had a rather unexpected disadvantage. Due to the implementation of this new component –relays had already been replaced by radio/vacuum tubes that produced less noise– computers could operate almost in silence. (Alberts 2003)

Engineers, however, regretted this loss of mechanical sonification despite the predominantly technological improvements. Transistors were more accurate, energy efficient, small and reliable. Hence, engineers started experimenting to bring back sounds by connecting loudspeakers to the circuit. This way, they reintroduced the possibility to monitor the computing process by ear. This led to the integration of sound that was specifically engineered to function as an 'auditory monitor'.

From the early 50s, some computers were already provided with a simple hoot which served as an alarm when the machine needed immediate attention. (Copeland & Long, 2017) The function was primarily called when the computer's operations failed, not for aurally monitoring the processes. (Alberts 2003)

Sensory Restoration through sound

Dutch engineer Gerard Alberts was one of the operators at the Philips Physical Laboratory in the Netherlands, doing pioneering work on computers. From the 1950s, Alberts recalls that sound functioned as an important tool for debugging. The loudspeaker sounds provided a sense of comfort as they facilitated a “sensory restoration of the relationship with physical calculation” (Alberts 2000, 45).

Nico de Troye, also a Philips engineer during that time, remembers from working with the IBM mainframe computer that: "The [Harvard] Mark I made a lot of noise. It was soon discovered that every problem that ran through the machine had its own rhythm. Deviations from this rhythm were an indication that something was wrong and maintenance needed to be carried out." (De Troye in Alberts 2000)

Now that computers were able to generate sounds, it was a small step to the first experiments in composing. Some experiments were even recorded and published.

Rekengeluiden van de PASCAL (Calculation sounds from PASCAL) is a 7" record with sounds produced by the PASCAL (Philips Akelig Snelle CALculator) from the Philips NatLab. The recordings consist of computing noises from the computer. Side A contains recordings from regular operation and mechanical noises. On the B-side is a recording of a prime number calculation. The record was included in the Philips Technical Review Vol. 24 (1962) No. 4/5.

A simple square wave generated by the circuit that could vary in tone and length was enough to create compositions. The clock frequency of the computer was used to calculate the frequency of a certain tone. Apart from the fun and playful atmosphere among the engineering team, this was a way to explore the capabilities of the machine. This led to further experimentation in pushing its limits. (Alberts 2003)

Memoirs of an 8-bit Bootlegger

As a kid growing up in the 80s and early 90s, 8-bit gaming consoles such as Nintendo Entertainment System (NES) or Sega Master System 2 were extremely popular. Having saved up to buy a Sega, I quickly realised I was actually not so much interested in the gaming itself. However, what I did find interesting were the weird sounds and pixelated visuals coming from the TV. I spent more time watching my friends play than doing it myself.

From an early age I was already interested in music. I owned a portable radio/cassette player from Aristona, the budget line from Philips which was discontinued in the late 90s. With this device, I used to record songs from the radio and create mix tapes. This sparked the idea to record the music coming from the games. The exotic Japanese influences and distinctive sounds from the early chips are probably what got my attention back then. This music was very different from what was being played on the radio at that time. It sounded much more futuristic, as if it came from another world. I recall wondering why these songs weren't in the charts.

I encouraged my friends to become better so I would be able to record the music from the final game stages, which I could not reach myself. A win-win situation, as they could play on my computer and I could capture the entire game's music. This bootlegging shaped my love for electronic music creation and publishing practices.

The development of the sound chip

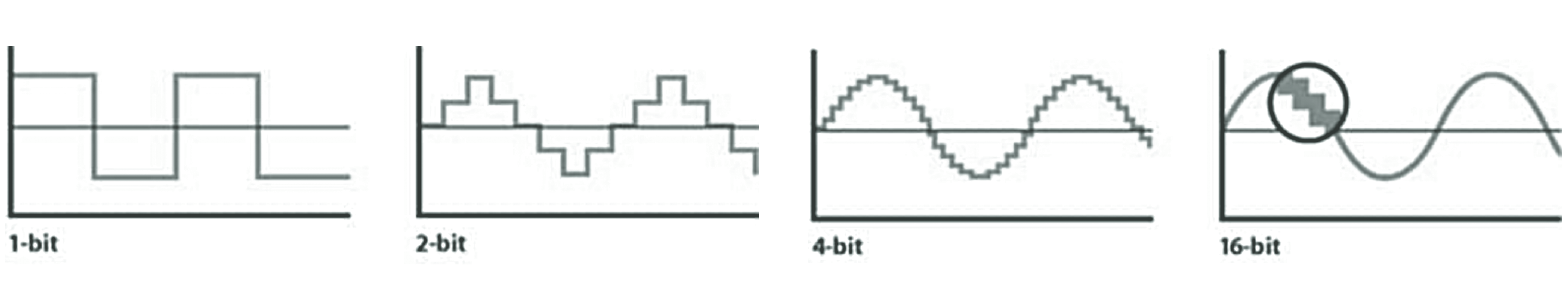

Sound can be produced by repeatedly switching electric current on and off amplified by a speaker. This is called 1-bit sound. Beeps from alarms, detectors, clocks, and melodies from musical postcards are common examples of such circuit sonification still in use today. ('utz' 2018) The basic principles of binary computation seemed perfect for this method of synthesising sound.

The theoretical basis for 1-bit sound in computers was already outlined by British mathematician and computer pioneer Alan Turing in Programmers’ Handbook for Manchester Electronic Computer Mark II in the 1950s. In the documentation, the "Hooter" function described a pragmatic audio routine using the binary 'ON or OFF' values to create simple square waves (⎍⎍⎍⎍⎍⎍) by using the clock speed of the CPU. Not intended for creative purposes, it was invented to create a supportive audible feedback system for computer-human interaction, such as tones, clicks, and short pulses produced by a loudspeaker. The manual also suggests that operators use the function to “listen in” on the progress of a routine. (Trois 2020)
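The ON/OFF principle described above can be sketched in a few lines of Python. This is only an illustration of 1-bit square-wave synthesis, not a reconstruction of Turing's "Hooter" routine: the function names, the sample rate and the 440 Hz tone are all assumptions made for the example.

```python
import struct

def square_wave_bits(frequency_hz, duration_s, sample_rate=8000):
    """Return a list of 0/1 values: the speaker is either OFF (0) or ON (1).

    The sample rate and all names here are illustrative assumptions.
    """
    n_samples = int(duration_s * sample_rate)
    bits = []
    for i in range(n_samples):
        # Each full cycle has two phases (ON, then OFF); count which phase we are in.
        phase = (i * 2 * frequency_hz) // sample_rate
        bits.append(1 if phase % 2 == 0 else 0)
    return bits

def to_pcm16(bits, amplitude=12000):
    """Map the 1-bit signal to signed 16-bit little-endian PCM bytes."""
    return b"".join(
        struct.pack("<h", amplitude if bit else -amplitude) for bit in bits
    )

# Half a second of a 440 Hz tone, made of nothing but ON and OFF states.
tone = square_wave_bits(frequency_hz=440, duration_s=0.5)
pcm = to_pcm16(tone)
```

The resulting bytes could be written to a WAV file with Python's standard `wave` module to make the tone audible. Changing `frequency_hz` changes the pitch, and changing `duration_s` changes the length, which is all a simple melody needs.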

From the 1960s, listening in on operations became obsolete as processing speeds increased. Variations were no longer possible to monitor by hearing. Besides that, displaying capabilities improved as cathode-ray tube monitors were introduced. (Ploeger 2019) The buzzing sounds of square waves developed alongside the urge to make the computer and its interfaces more user-friendly. Simple sound signals were sent to the user when errors occurred or an event demanded attention. The focus was more on developing the visual aspect.

In the early 70s, the first computer games became popular. The release of the legendary arcade game Pong showed the potential of sound for an expanded experience. Sound could thus provide a new layer for both understanding and experiencing the game and its interface. This caused a growing demand for more advanced sound effects and theme music. An integrated circuit that could produce more versatile audio signals and music was developed: the Programmable Sound Generator (PSG), commonly known as a sound chip.

"The sounds of first-generation video games like Pong were still generated on the basis of specific electronic circuitry due to limited storage capacities. This meant that the options for integrating musical forms were severely limited. But still, even the characteristic noises of this early phase fulfilled the important function of generating auditive feedback coupled with the visual events on the screen." (Stockburger 2015, 129)

As these chips were widely implemented, sound designers for games and interfaces treated their limitations as a challenge to create the fullest and richest sounds possible. Companies that developed sound chips, in turn, ended up in a wild 'race', with the fastest way to play back high-definition, noise-free, full-range orchestral sounds as the ultimate goal. Over time, various techniques for sound synthesis and sample playback were explored by different manufacturers. The outcome was a large amount of experimentation in –mostly– game music.

The MOS Technology 6581/8580 SID (Sound Interface Device) is the built-in programmable sound generator chip of many models of Commodore computers. It was one of the first sound chips of its kind to be included in a home computer prior to the digital sound revolution. (source: Wikipedia)

Operational sounds from personal machines

Due to the 'microcomputer revolution' in the late 70s, computers could be designed small enough to enter homes and offices. (Jensen 1983) At that time, there were still some operational noises coming from our machines. The clicking sound from the relays going on and off had already changed into a static buzz coming from the motherboard. The sound of loading programs from punch cards had evolved into the mechanical sound of loading software from magnetic tape.

In 1965, a couple of years before co-founding the company Intel, engineer Gordon Moore predicted an “exponential increase in the number of components” (transistors, resistors, diodes, or capacitors) that could be “crammed” onto integrated circuits every year. (Moore, 1965) This rapid growth had a widespread impact on technological change in all related fields: electronics, and therefore computers, quickly became smaller and faster over the following years. This prediction was later adjusted to a 1.5 to 2 year forecast and became known as Moore’s Law.
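To get a feel for what the difference between a one-year and a two-year doubling forecast means, the growth can be written as a simple calculation. The sketch below is a reading of the doubling idea, not a formula taken from Moore's text; the function name and the starting count of 64 components are hypothetical values chosen for illustration.

```python
def projected_components(initial_count, years, doubling_period_years):
    """Component count after `years`, if it doubles every `doubling_period_years`.

    Hypothetical helper: count = initial_count * 2 ** (years / doubling_period)
    """
    return initial_count * 2 ** (years / doubling_period_years)

# Starting from an assumed 64 components, ten years later:
yearly = projected_components(64, years=10, doubling_period_years=1)    # 65536.0
biennial = projected_components(64, years=10, doubling_period_years=2)  # 2048.0
```

Over the same decade, the yearly forecast yields 32 times as many components as the two-year forecast, which shows how sensitive exponential growth is to the doubling period.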

The sound of tape was soon replaced by the distinctive spinning noises of a new storage device: the floppy disk. Older "machines" often started making heavy blowing sounds over time. The fans, implemented to prevent the computer from overheating, attracted so much dust that their rotating mechanism got louder and louder.

A new archetypical sound made its entry when personal computers were able to access the internet. When networking over phone lines was introduced in offices and homes, the screeching sound of dial-up modems was an unmistakeable indication that data was being sent and received. This signal could also be used to listen in on a certain process in order to monitor when a connection was being made. You could basically pick up the phone to hear if someone was using the internet.

Dial-up modems were developed to send data over the phone lines: a network that was specifically designed to only carry voice. Because of this, the communication between two modems had to take place in the audible range in order to exchange information. Dial-up modems decode audio signals into data to send to a router or computer, and encode signals from the latter two devices to send to another modem. The reason the start-up of the dial-in routine was amplified through a loudspeaker was to let the user hear if something went wrong with the connection (for example, an occupied line, an invalid number, or a person picking up the phone instead of a modem).
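The idea of carrying data as audible tones can be illustrated with frequency-shift keying (FSK), a technique used by early low-speed modems in which each bit value is sent as its own tone. Later modems used more elaborate schemes, so this is a simplified sketch under stated assumptions rather than a description of any particular modem: the two frequencies, the bit length and all names are illustrative.

```python
import math

SAMPLE_RATE = 8000     # samples per second (assumed)
SAMPLES_PER_BIT = 80   # 10 ms per bit at 8000 Hz (assumed)
FREQ_ZERO = 1000       # tone representing a 0 bit, in Hz (illustrative)
FREQ_ONE = 2000        # tone representing a 1 bit, in Hz (illustrative)

def modulate(bits):
    """Encode: turn each bit into a short burst of one of two audible tones."""
    samples = []
    for bit in bits:
        freq = FREQ_ONE if bit else FREQ_ZERO
        for n in range(SAMPLES_PER_BIT):
            samples.append(math.sin(2 * math.pi * freq * n / SAMPLE_RATE))
    return samples

def tone_energy(chunk, freq):
    """Measure how strongly `freq` is present in a chunk of samples."""
    re = sum(s * math.cos(2 * math.pi * freq * n / SAMPLE_RATE)
             for n, s in enumerate(chunk))
    im = sum(s * math.sin(2 * math.pi * freq * n / SAMPLE_RATE)
             for n, s in enumerate(chunk))
    return re * re + im * im

def demodulate(samples):
    """Decode: for each bit-length chunk, pick whichever tone is stronger."""
    bits = []
    for start in range(0, len(samples), SAMPLES_PER_BIT):
        chunk = samples[start:start + SAMPLES_PER_BIT]
        is_one = tone_energy(chunk, FREQ_ONE) > tone_energy(chunk, FREQ_ZERO)
        bits.append(1 if is_one else 0)
    return bits

message = [1, 0, 1, 1, 0, 0, 1, 0]
audio = modulate(message)
decoded = demodulate(audio)
```

Because both tones sit well inside the range of human hearing, anyone picking up the phone would hear the data itself, which is why the transfer was audible at all.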

The violent sound of networking

In the early 90s, my brother and I visited my uncle for a sleepover. My uncle, working as a mathematician and programmer at the time, wanted to show us something new and exciting: 'the internet'. To demonstrate its capabilities, we were going to 'travel' to the NASA headquarters while just sitting at the computer. The initial complex dial-up routine failed. The second attempt was more successful. When I saw the NASA logo appear line by line, I knew something memorable was happening. The fact that the information and photos dealt with planets and space exploration made the visit even more captivating, as if we actually were in space.

Fast forward to the late 90s: the internet had me hooked. The ability to consume all this information, chat with my friends, and download and share music was mind-blowing and slightly addictive. To control the time my brother and I spent online, my dad installed a piece of gatekeeping software to manage the costs. It basically counted down the remaining minutes according to a certain budget we each got per month. Zero minutes left meant you could no longer access the internet.

At the time, not being able to go online was the worst that could happen. Pretty quickly, I found a 'workaround to circumvent' (read: hack) the program. The costs being higher than expected left my parents clueless and frustrated month after month. Besides that, the phone line was occupied very often. The realisation of not being able to make a phone call was loudly accompanied by the sonic terror of the dial-up modem. This confrontation infuriated my mother every single time it happened. Whenever my mother wanted to call my aunt, which I believe happened over 4 times a day, I remember her yelling upstairs how it was possible the phone line was ALWAYS occupied by this "HORRIBLE" sound. (Sorry mum!)

Sound as a valuable source of information

Sound is capable of adding an extra layer to visual information, making it tangible and probable. This is what American philosopher of science and technology Don Ihde calls the 'Auditory Dimension'. The term describes how sound can provide information about material characteristics, movement, location and distance as well as the event that caused it. This extra layer of sonic information can make an appearance richer and more lively. Sound can draw the attention to an object that you might not even be able to see. (Ihde 1974) Silence can be defined as the absence of sound. Even though sound waves are everywhere around us, most of the time they consist of such low intensity that they don't draw attention to themselves directly.

Sound plays an important part in understanding and navigating the world around us. Sounds such as signals and cues can be perceived as warning signs that identify a certain danger. For both animals and humans, in nature and in the inhabited world, it can even be a matter of life or death.

The mediating relationship of listener to environment through sound (model by B. Truax, Acoustic Communication, New Jersey: Ablex Publishing, 1984)

There is foreground listening and background listening. Our attention is able to shift between these modes. By changing in volume or timbre, sound can move from background to foreground, as it demands to be listened to more consciously. Sounds such as signals, car horns, alarms, whistles, barks, some types of speech, etc. can immediately change our listening mode as they demand our attention. The sound of a ticking clock, a running car engine or other mechanical sounds can go unnoticed. Once we start to listen attentively, however, our attention can shift towards them.

User interface sound design

The design of audible cues in Human-Computer Interaction (HCI) is a practice we now know as 'User Interface (UI) sound design'. Ranging from simple beeps to full acoustic compositions, these sounds are used as signals to provide the user feedback in a certain system. They can help to understand, guide and adjust behaviour by drawing attention to messages, functions, hints, warnings, etc. (Blattner et al. 1989)

With the aforementioned rise of more advanced playback capabilities, sound cards in computers were increasingly able to produce more natural sounds. Low-tech sounds such as basic synthetic beeps became less desirable as they sounded outdated whereas high-definition sounds equated to progress.

Startup screen for Windows 95 by Microsoft.

From the mid 80s, it became interesting for companies to get sound designers involved in their corporate branding strategies. Sound could help to strengthen the user's relationship with both the usability of the machine's interface and –unsurprisingly– its brand. The pinnacle of this strategy was Brian Eno's iconic orchestral sound that played when starting Windows 95. Just as iconic as the sound itself is the commissioning brief which, according to Eno, consisted of up to 150 adjectives: “The piece of music should be inspirational, sexy, driving, provocative, nostalgic, sentimental … and not more than 3.8 seconds long.” (Eno in Cox 2015, 271–272).

Audible signals complementing the visual aspect of the system were now a priority. With the use of "auditory icons", (Gaver 1986) "audicons", or "earcons" (Blattner et al. 1989) interfaces could distinguish themselves on a sonic level.

The sound designs were often derived from the physical processes in which the actions originated. For instance, emptying the trash bin on the desktop, containing a list of deleted files, would make the sound of paper crumpling. This refers to the paper 'file', a word previously used for a collection of programs on punch cards. In systems today, the sound of an analogue camera shutter is played when making a screenshot. These phenomena are so-called audio skeuomorphs: they virtually mimic the sound of the former material process.

Left: paper punch card / Right: the development of Apple’s UI design for the OS trash-bin icon (1984 - 2014)

In the latest update from Windows 10, the startup sound disappeared. The reason for this choice was stated as follows: "When we modernized the soundscape of Windows, we intentionally quieted the system. … you will only hear sounds for things that matter to you. We removed the startup sound because startup is not an interesting event on a modern device. Picking up and using a device should be about you, not announcing the device’s existence." (Microsoft Corp. in Wong 2015)

Sounds that matter to whom?

It is interesting to take a closer look at what "things that matter to you" actually means here. The fact that it is followed by the statement that the device should be about the user and "not announcing the device’s existence" makes this a rather confusing argument.

As silence becomes the new standard in modern computing, our devices always seem ready to use, even though they appear inactive. In addition, devices nowadays no longer necessarily have to be switched off. A completely inactive device is becoming rare, as devices are charged before running out of power. We forget that devices can be turned off and that we actually do have a choice in that. While an unforeseen dead battery may cause distress to some, deliberately turning off a device can be considered a radical act of taking control. To some people, voluntarily going 'offline' for a while induces a sense of autonomy and empowerment.

With the absence of (a startup) sound, we miss an important and trusted sensory cue that reminds us about our device's activity, availability and presence. If this activity becomes unquestioned, we lose track of what our devices are actually processing. We forget about the importance of knowing what is happening behind the interface: choosing how a tool can serve us, what it should and should not do. This matters to us.

Announcing its existence thus contributes to a sense of autonomy and control. While we do not need to contemplate the philosophical properties of a tool every time we use it, it is important to be reminded when and how it can be used or –no less important– misused. Take the pen, for instance: what this tool is capable of beyond its material properties is undeniable. (Wasn't it mightier than the sword?)

When humans use an object or tool, it becomes something different. The more actively and critically one utilizes it, the closer the relationship becomes. The computer makes no sense if we don't use it. But it makes even less sense if we are not aware we are using it. The sound of computer activity can enhance this presence and improve our engagement so the relation with our computing devices does not just simply become 'convenient' or 'useful'.

The Microsoft quote about not aiming to announce the device's existence almost sounds like a denial of its power and potential: the machine is executing complex actions for us, actions a human brain could not perform so quickly. Yet "intentionally quieted the system" leaves room for reconsideration. Doesn't this silence prove otherwise? The virtual sounds from sonic skeuomorphs have a dubious role herein, as they are essentially disconnected from their underlying technological apparatus. Because they are confusing, it's hard to connect them to the basic principles and function of the computer as a tool. Nevertheless, I believe they are better than nothing, as we need to rethink the role of sonification and rediscover its potential.

A fanboy

After using my slick grey laptop for some years, I started to notice a feeling of disconnection over time. The latest updates of the operating system seemed to turn my once-so-beloved machine into a closed-off consumer-safe product. I came to the conclusion that my current machine –the most important tool in my life– no longer reflects my user behaviour. Nor does it meet my changing needs. I always disliked the fact that it was intentionally designed not to be opened up. Back in the day, replacing a sound card was just a matter of buying the part, opening up your tower and installing it. It felt empowering when it worked.

Some weeks earlier I had bought a new Raspberry Pi after I started to gain interest in using 'more personal' computers again. When I opened the package, I was happily surprised to find a small fan included in the kit. Since the Pi doesn't have a power button, I started to use my ears to check whether it was on by just leaning over and putting my ear close to the device. By listening to the fan, I could also tell how fast it was executing a certain task for me. Basically, I just checked its 'breathing'.

One week later, I am working from home. On the other side of the table, my girlfriend is working on her laptop. Over the past months, she has been slowly preparing herself to finally say goodbye to her beloved –yet dying– computer. Executing trivial tasks started to sound as if her laptop was about to lift off. As this occurs again, I see her face changing into full panic mode. Suddenly she yells "OH NOOO!!!! I DON'T THINK IT'S GOING TO MAKE IT!!!!!"

Processing information: always, everywhere (in silence)

Ubiquitous Computing is a term coined by computer scientists Mark Weiser and John Seely Brown in 1988. Both of them were chief technology officers at Xerox PARC at that time. The term described a future scenario where computational capability is embedded into everyday objects. This technological shift changes our relationship with our devices.

Our relationship with computers changed heavily over time. It started with Mainframe Computation, where only experts and engineers had access to a shared computer, usually behind the closed doors of research and scientific development companies. This was followed by the PC era, instigated by the microcomputer revolution. Now, computers became personal by entering our homes and offices, usually placed in a fixed spot, customisable and expandable to the user's specific needs. Hereafter, Internet and Distributed Computing describes the phase when computers connected to the internet. This led to a massive virtual interconnection of business, personal and governmental purposes. This eventually transformed into Ubiquitous Computing. By equipping 'everything' with inexpensive microprocessors and putting them in networks, objects become able to effectively communicate and perform 'useful' tasks in the background: for example (smart)phones, tablets, watches, TVs, refrigerators, cars, printers, thermostats, lights, etc. Today, we call this The Internet of Things.

Due to its inconspicuous introduction, we may not be aware that Ubiquitous Computing is already a part of our daily lives. Whether we need it or not is highly debatable. The reality is, however, that we are already surrounded by computer activity as our devices constantly exchange and process information. While digital computation itself became silent, our attention is continuously grabbed by auditory and visual cues aimed at hooking us to platforms and online services. This is accompanied by a change in appearance: being integrated, becoming smaller, skilfully hidden. This obfuscates their existence.

Credits: Franziska Haaf

Digital processes seem immaterial and bodiless. Browsing the internet, storing data in the cloud, sending emails, calling, using messaging apps, etc. evoke the idea of an intangible, weightless environment. When these networked services stop functioning, their physical nature becomes bluntly exposed.

Digital computing often feels like it exists "beyond the material world" as Nathalie Casemajor explains in Digital Materialisms: Frameworks for Digital Media Studies. It is, however, still rooted in simple voltage differences. It consists of switches going on and off, within circuits. Just like the first generation machines that were so large they could easily fill up an entire room. Computation is therefore eminently a material process.

While the theory of Materialism assumes that all things in the world are tied to physical processes and matter, the material state of the digital environment is harder to comprehend because of this seemingly disembodied nature. (Casemajor 2015) The physicality that allows these digital processes is often detached from material characteristics, constraints and notions of decay. For better understanding, efforts are being made to bring back awareness of the material dimension of computing, as it directly relates to current geopolitical issues, the energy crisis, toxic emissions, e-waste and more. These efforts are addressing issues surrounding the physical impact of data storage, computing devices and network infrastructures. This makes it even more important to rethink how sound and the loss of sonification contribute to this.

"The materiality of digital worlds is now widespread across social sciences and humanities, including in science and technology studies, human–computer interaction, design, sociology, literature, library and information sciences, and organizational communication." (Casemajor, 2015)

Calm Technology

Designing Calm Technology is a proposition written in 1995 by Mark Weiser and John Seely Brown. It opens up a dialogue about what they think of as 'the most important design problem for the twenty-first century'. Being already surrounded by convulsively attention-grabbing technology, they propose a different way to think about it by identifying a difference in how technology can engage with one's attention. The writers describe this as 'the periphery' of our attention.

"We use 'periphery' to name what we are attuned to without attending to explicitly. Ordinarily when driving our attention is centered on the road, the radio, our passenger, but not the noise of the engine. But an unusual noise is noticed immediately, showing that we were attuned to the noise in the periphery, and could come quickly to attend to it." (Weiser & Seely Brown 1995)

As they explain it, 'Calm Technology' attunes to our attention instead of constantly demanding it. It should have a calming effect on the mind, but it clearly does not choose to be silent or visually absent. They emphasize that this is not a division by importance, but an engagement that is able to shift constantly between the center and the fringe of our attention.

The writers present two reasons why we should aim for this. The first is that by placing 'things' in the periphery, technology does not overload our senses or distract us; instead it 'informs' us when a device is processing information for us. The second is that users are offered a choice, as they are able to 'recenter' cues from computer activity from the periphery. And if we have a choice, we can take control instead of being dominated by technology (regardless of the differences in how humans process stimuli).

In the case of sound and sonification, silence would suggest there are no processes running. Sound should therefore occupy a more balanced place in our field of hearing. It should announce its existence, but not overstimulate.

On agency and autonomy

When talking about human agency in a digital world, a key question is often raised: what level of basic knowledge about a computer's operation and function is required in order to understand the impact it has on our lives? Especially in relation to the growing use of Artificial Intelligence (AI) and Machine-Learning in chatbots or other text and voice interfaces, the 'man is not a machine' stance has been an important topic of discussion. Joseph Weizenbaum, a computer scientist and early AI researcher, noticed a dangerous trend.

In his book Computer Power and Human Reason: From Judgment to Calculation (1976), Weizenbaum carefully explains that computers excel at tasks involving quantification, but lack emotion and the ability to reflect, think and make decisions. Even though these can be simulated through algorithms and scripts, this stands in stark contrast to the enigmatic power of human intuition.

Weizenbaum demonstrated this with the ELIZA program in 1966. Working with natural language and apparently showing interest in the human subject, ELIZA was able to fool even computer scientists at Weizenbaum's lab into believing the computer 'understood' the conversation. With ELIZA, Weizenbaum proved that software has no real understanding of the world and the human subject it interacts with.

ELIZA was a fairly simple program which performed natural language processing. Executing a script titled DOCTOR, the screen-based text interface was capable of engaging humans in a conversation. The script bore a striking resemblance to how an empathic psychologist would communicate. However, ELIZA did not understand. It was programmed to make the user believe it understands.
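To make tangible how little 'understanding' such a conversation requires, the following is a minimal Python sketch of an ELIZA-style responder. It is not Weizenbaum's DOCTOR script: the rules, the reflection table and the function names `reflect` and `respond` are hypothetical and chosen purely for illustration. The program only matches patterns and echoes fragments of the input back.

```python
import re

# Hypothetical rules for illustration only; this is NOT the original DOCTOR script.
RULES = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
FALLBACK = "Please go on."

# Swap first-person words for second-person ones so the echo reads like a reply.
REFLECTIONS = {"i": "you", "my": "your", "me": "you", "am": "are"}


def reflect(fragment):
    """Return the fragment in lower case with first-person words swapped."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(sentence):
    """Answer with the first matching template, or a stock phrase if none match."""
    cleaned = sentence.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


if __name__ == "__main__":
    print(respond("I am worried about my work."))
    print(respond("The weather is nice."))
```

Nothing in this sketch models meaning. The apparent empathy comes entirely from templates written by a human, which is the point Weizenbaum kept stressing.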

Interface for the ELIZA program by Joseph Weizenbaum (1966)

Weizenbaum concluded that the computer could not be separated from the social context its operation is situated in. Therefore, he kept reminding others throughout his career about the reason computers exist in the first place: they were designed for the military as a tool of warfare. An important part of his message was making clear that the computer makes use of a set of rules, decisions and physical conditions created by humans, not by the computer itself. Computation does not appear organically in nature.

Being aware of this power and social impact, Weizenbaum closely monitored those at MIT who were responsible for deciding which computer systems and applications would end up being available to the public. Hence his overarching mission to advocate for a general understanding of the basic workings of computers: he saw the "demystification" of computers as an ethical challenge. Weizenbaum remained critical towards his own field: just because a computer can do something does not mean it necessarily should. What are the limits of what computers should do?

"Once a particular program is unmasked, once its inner workings are explained in language sufficiently plain to induce understanding, its magic crumbles away; it stands revealed as a mere collection of procedures, each quite comprehensible." (Weizenbaum 1966)

Weizenbaum feared that to "some computer scientists, the types of problems that computers excelled at solving were cast as nearly synonymous with the types of problems humans tried to solve." (Joseph Ratliff, 2016) He later made this concrete: "there are some human functions for which computers ought not to be substituted. It has nothing to do with what computers can or cannot be made to do. Respect, understanding, and love are not technical problems." (Weizenbaum, 1976) He also stated that "the validity of a technique is a question that involves the technique and its subject matter." (Weizenbaum 1976, 35)

Digital tools seem to have great power in connectivity and in executing tasks the human brain cannot perform. Yet, they appear clueless when errors occur. This can even be caused by a tiny binary inaccuracy, a faulty line of code or a dead battery. When they are not operational, this breaks the perception of their regularity. Separated from its function, the device confronts the user with its sole material being: it represents a potential function but is not able to execute it (which leads towards Heideggerian questions about the properties, meaning, presence and existence of an object). This can overwhelm us and lead to a feeling of alienation.

Accessing the internet by means of brute force

When I moved into a new place, a lot of work needed to be done. Because it is an old house, the use of space was rather inconvenient. It also had outdated amenities such as hinges and locks. I remember finding a keychain lying around in a kitchen drawer. It held 28 different keys. This was pretty remarkable since I only counted 7 keyholes in the entire house. Thinking about their origins, how they ended up here, and what use they could have had for previous owners and the owners before them left me clueless. I would just save them for now, just in case. You never know...

In the final stages of doing the odd jobs, I had installed the router in the electricity closet where the network cables entered the house. Then, an 'interesting' event occurred.

When I wanted to set up the wireless network, the router did not seem to work. What ought to be a quick fix ended up as a rather violent confrontation: the door to the space where the router was installed had locked by itself. Although the lock had not seemed to be functional before, I came to the conclusion it was shut tightly. After I spent a long time figuring out which one of the 28 keys was the right one –of course– none of them would fit. Inconveniently, this particular door not only gave access to the router, but also to the water mains, the electric fuse box and some tools I had already placed there. It was a doorway to all kinds of basic human needs. Accessing the internet was my top priority at that moment.

Eventually, my dad and I were left with no other option than to force our way in. The act of breaking the door with a crowbar caused a violent cracking sound. What seemed to be a fragile lock eventually led to replacing the entire door. Due to this new lock, a 29th key was introduced to the keychain. I remember this as the hardest effort ever to go online.

Interfaces, affordances and doorknobs

In order to understand the significance of sound and what problems occur now that it is disappearing, it's important to take a look at how we interact with our devices. One could debate what the active role of the user is in all this, or even what basic knowledge should be taught. Nevertheless, a lot of power arguably lies with those who design the interfaces of software and systems. They decide how we use and experience the interaction with our devices and what sounds we hear or do not hear.

The interface establishes interaction between a human and computer. In Software Studies, A Lexicon, Matthew Fuller and Florian Cramer define the interface as that which connects “software and hardware to each other and to their human users or other sources of data.” (Fuller & Cramer 2006) As a practice, it is designing the gateway devoted to decision-making, as Olia Lialina explains in her 2019 article Once Again, The Doorknob: Affordance, Forgiveness, and Ambiguity in Human-Computer Interaction (HCI) and Human-Robot Interaction. She emphasizes the importance of the interface:

"To say that the design of user interfaces powerfully influences our daily lives is both a commonplace observation and a strong understatement. User interfaces influence users’ understanding of a multitude of processes, and help shape their relations with companies that provide digital services. From this perspective, interfaces define the roles computer users get to play in computer culture." (Lialina 2019)

Lialina discusses the text Why Interfaces Don’t Work, published in 1990 by Don Norman. Norman, working as a designer for Apple besides being a theorist, advocated for "transparent" interfaces, a notion that was later adopted in interface terminology as “invisible” and “simple”. Driven by the huge influence the company had at the time, the idea that the interface should go unnoticed turned into a dominant conception. (Lialina 2019) This assumption was later pushed further by Jef Raskin in The Humane Interface (2000). Raskin, who initiated the Macintosh project and researched this topic, writes: “Users do not care what is inside the box, as long as the box does what they need done. […] What users want is convenience and results”. The responsibility developers have, and how far they should hide computational processes from the user, goes unquestioned.

When discussing the affordances of an interface, the metaphor of 'the doorknob' is frequently used. In a passage quoted so often that it became the standard way to explain the imagery, Norman wrote: “A door has an interface – the doorknob and other hardware – but we should not have to think of ourselves as using the interface to the door: we simply think about ourselves as going through the doorway, or closing or opening the door.” (Norman 1990, 218) To Norman, the doorknob should thus not be an obstruction for the user.

Contribution for ‘world’s worst volume control Interface design challenge’ on Reddit. (creator unknown)

There is a lot to argue against this description. It can't be ignored that a doorknob is in fact a highly complex object. Its function varies depending on where it's situated. A door is an entrance to another space. The doorknob offers this possibility.

For this reason, it raises questions regarding power structures. Who can, or is allowed to, open it? What direction does it open to? In theory, even its material characteristics and placement can provide information on this matter. Besides that, terms such as "transparent" and "unnoticed" are highly open to interpretation.

If we think about the role of sound in restoring embodiment and agency in computation, a hypothetical question arises. What sound should the doorknob's mechanism make? The spectrum of choices provides endless possibilities: from the literal sound that mimics the doorknob's mechanics, all the way to the CPU synthesizing a 1-bit cue produced by the circuitry of a machine. Or one of the countless imaginative metaphorical sounds in between?

The Silent Threat

After a morning of procrastinating, I finally succeeded in leaving the house. I needed to go to the grocery store to buy some lunch. Since the store is quite close, I wondered why it took me so much effort today. Getting out is always good for my concentration in the long term, and eating increases my energy levels even more.

As I was approaching an intersection, I got distracted by a couple of unusual bird sounds coming from the air. They almost sounded like they were artificially synthesised. As I crossed the street, an unexpected quick movement caught my eye, scaring the life out of me. I froze.

In a split second, I realised a car had approached me to the point of almost hitting me. It happened so fast, I hadn't noticed it. As I was left puzzled, processing what just happened and why I had not spotted it, I suddenly realised it was an electric car. Since the motor itself had almost been silent, the uncanny sound from the tyres moving over the road alone had not caught my attention. Still shocked by what had been a dissonance between ears and eyes, I felt angry. This event that happened between me and the 'Assassin on Wheels', as I jokingly called it, had a big impact on how I felt over the next days. I kept thinking about the role of sound in relation to safety and even ethics, wondering why there is no discussion about this. Now that we can produce silent cars, does that mean we should?

Dealing with silence: parallel challenges in sound design

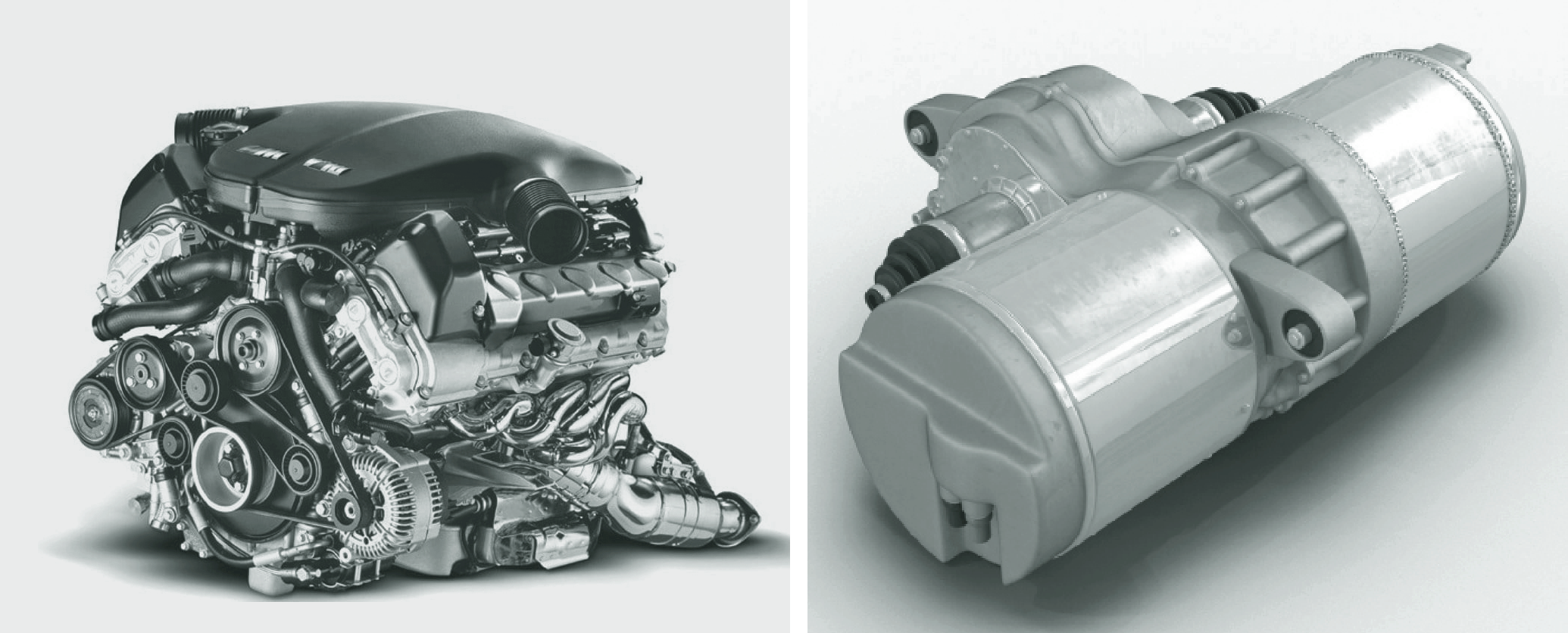

Today, dealing with silence is a subject for discussion in many fields. Technological developments cause original sounds to disappear, creating a need for virtual replacements. Such struggles can be found in the sound design for electric cars and motorcycles, where these kinds of challenges fall under "active sound design". Electric motors are increasingly replacing the mechanics of the combustion engine. Because electric motors can operate almost in silence, sound becomes obsolete here. This is causing safety issues (you don't hear them approaching).

The practice of Active Sound Design is deployed to ensure that attractive sound signatures match customer expectations. On one hand it masks the unpleasant sounds. And on the other hand, it creates acoustic feedback by adding the missing engine orders. On top of that, Active Sound Design generates exterior sound to also serve the original equipment manufacturers’ legal requirement to develop acoustic vehicle alerting systems. (Sambaer 2020)

Left: combustion engine (BMW) / Right: rechargeable electric motor (Tesla)

Because of this silence, the electric vehicle would also 'lack emotion'. This leads some designers to completely replace former engine sounds with Audio Skeuomorphs. Some are exploring alternative replacements such as –again– orchestral sounds. Some stick with silence. Other companies dealt with it in their own fashion.

For BMW’s electric car model ‘iX’, renowned German film score composer Hans Zimmer designed “a completely new symphony” instead of replacing the traditional engine sounds.

Harley Davidson, a motorcycle brand notorious for the sound their iconic engines produce, already amplified the sound of the motor in an early design. “The sound is the most important, and we didn’t want to lose that. We didn’t want a silent product”, Jeff Richlen, chief engineer for the new electrical prototype bike, said in 2014. Later, the company revealed how sound would be implemented: by using a compact “engine sound system” consisting of two speakers located on the tail of the bike to produce the artificial engine sound, corresponding to the rider accelerating.

In the field of UI sound design itself, interesting developments are happening. In the paper Sound Design for Affective Interaction (2007), Anna DeWitt and Roberto Bresin investigate how sound functions in complementary ways to certain visualisations. The main goal is to create interaction with embodiment as its central aspect. The researchers describe their attempts to "narrow the gap between the embodied experience of the world that we experience in reality and the virtual experience that we have when we interact with machines." (DeWitt & Bresin 2007). For example, when a message is received, a cue that mimics the sound of marbles falling into a container is played. Here, the container is used as a metaphor for incoming data on a handheld computational device. The reasoning behind this choice is that the marbles' "sound model for impact sounds can be configured to infer the idea of material, weight and impact force."

A more straightforward example is the mechanical keyboard, whose popularity has grown rapidly over the last decade. Modern keyboards become flatter, quieter and increasingly turn into smooth touch screens. Apparently, our senses miss the additional sensory input of the material. Besides touch, sound also used to accompany the strokes on the keyboard. On smartphones, this haptic feedback was replaced by a vibrating cue which also provided a trembling sound. Because of this absence, emulations of mechanical keyboard sounds have been developed for notebooks. (But also for "annoying the hell out of my coworkers", as the developer states.)

Lastly, most digital cameras emit a shutter-click sound when taking a picture. This audio skeuomorph, simulating a mechanical analogue camera, has been replicated in digital cameras to provide feedback to users. Remarkably, countries such as South Korea, India and (likely soon) the US have legislated that new camera phones must include a loud clicking sound when someone takes a picture. The purpose of the sound is to alert people that a picture is being taken and to prevent violations of privacy and predatory behaviour. (Parikh 2019)

Due to rapid technological developments, the sounds and sonification of computer activity are about to disappear. Audible cues from interfaces seem to follow this trend. But just because we have the technical capability to silence everything, does that imply we also should? And is it too late to ask?

To a lot of new users, the sound of the fan from our aging laptops might be one of the last sonic traces that connect us to the material world of computation. Once this mechanical 'flaw' can be silenced, will there only be a few confusing sonic skeuomorphs left?

We seem to drift further away from the material nature of the digital processes that happen all around us. Sonic information is essential for restoring our sensory relationship with the basic principles of computation and networking. As we become increasingly dependent on these, sound can contribute to regaining autonomy through cognition and perception. Especially now that we are able to make our computational devices smaller and faster and connect them through invisible networks, it keeps getting harder to grasp what's happening behind the interface.

The coming of age of rejecting silence?

We can't seem to let it go. In movies and animations, a notable pattern keeps repeating itself. When computer activity is being depicted, it is almost always supported by sound. Streams of zeros and ones are usually accompanied by familiar high-pitched bleeps, as if generated by complex computer code. Repeatedly reused, this became an archetypical audio-visual representation of computation. Although the execution of code and the binary process itself nowadays don't make any sound, there seems to be an ongoing urge to explain this process through sonification. It draws attention to the computer activity, emphasising it on an almost hyperrealistic level. Besides having evolved into a cinematic standard, there is more behind it. Does it expose the human desire to be in control in the Man-Machine relationship? What's this human sensitivity to these beeps?

Description on YouTube: “If you need futuristic sci-fi computer beeps, bleeps, and buttons sound effects, scanning, readouts, and data processing sound effects, or if you need variety of sci-fi computer typing sound effects in order to give the project that you’re working on that sophisticated, futuristic sci-fi sound, Binary Code soundpack has you covered!”

The sonification of invisible processes keeps occurring in various fields and practices. There seems to be an ongoing need to bring highly complex 'inhuman' activities to the human sensorial world. Intangible activities such as data streams, radiation, theories of physics and math, etc. have repeatedly been subject to sonification. Why? Because there is something reassuring to this. Monitoring sonic information from our surroundings gives the impression that we oversee invisible activities in order to estimate their potential impact (safety, damage). It is in human nature to identify danger in sounds that deviate from familiar patterns. This is certainly the case with computers and their seemingly complex operations running increasingly 'beyond our sight and understanding'. Sounds and sonification can at least give us the impression we are in control. But there is more to these sounds.

Drifting away from the sound of a CPU

In the pursuit of 'high definition' playback capabilities, an interesting side effect occurred: this evolution led to a step-by-step disconnection from the material origins of the source of computation itself. Starting from the switching current of circuit sonification, evolving into 1-bit sounds from the CPU, followed by 4 to 16-bit FM sound chips in which various synthesis and wavetable playback techniques were explored, we rather quickly achieved full-range recording and playback capabilities. This resulted in the ability to play back any imagined sound: free from background noise and without signs of material limitation.

Nowadays when we label sound or music as 'lo-fi' (low-fidelity) or 'low-tech', we generally allude to audible 'imperfections'. Characteristics such as background hiss, flutter from magnetic tape recordings, distortion through amplification, etc. imply a remark regarding quality. But in fact, we refer to audible traces of material 'limitations', due to the limited technical capabilities of the recording gear that was used. Interestingly, the terms 'lo-fi' and 'low-tech' are also commonly used to describe the low-resolution sample playback capabilities of obsolete chips and sound cards: for example, grainy PCM samples, loss of quality due to recording settings, and even low bitrates caused by digital compression or conversion. Whether the term is used correctly is up for debate.

If we think about how this 'race' eventually ended up in today's predilection for silence, this hunger for progress seems logical in retrospect. Rapid developments in computer technology conditioned the consumer to be hungry for the next big thing. So why go back to something that sounds outdated? As a result, the question whether sound should be eliminated altogether has never been a topic for discussion. We just went with it.

Even though low-tech sounds may seem clumsy and outdated to some, their distinctive characteristics are also widely praised. The use of sound chips as instruments can be deployed as an artistic choice to obtain certain aesthetics of nostalgia. Their use can also be disassociated from the context in which these sounds originated, as these means and methodologies were typically discarded when the next big thing came around: “arguably, before their potential could be fully explored.” (Long in Low Level Manifesto 2016). Above all, there seems to be a natural attraction in human hearing to these basic characteristics: these sounds somehow connect us to the material world of their source.

The chip music scene is a worldwide scene dedicated to making synthesised electronic music using obsolete programmable sound generator (PSG) chips or synthesizers in vintage arcade machines, computers and video game consoles. (Source: Wikipedia)

Audio resolution and bit depth in recording explained: the higher the bit depth, the more accurately the recorded audio signal is represented.

Low-tech sounds make the inner workings of the technology needed to produce them more present to the ear (even when they are emulated). The stronger the technical limitations, the more this 'true nature' is revealed. The synthesised sounds of beeping square waves are imprinted in our collective memories. In my opinion, they transcend nostalgia because we have a deep human connection to them.
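The relation between bit depth and accuracy can be sketched in a few lines of Python. This is a simplified illustration, not a description of any particular sound chip: the function names `quantize` and `max_error` are hypothetical, and samples are assumed to lie between -1.0 and 1.0. Each sample is rounded to the nearest of 2^n available levels, so at a bit depth of 1 only two levels remain and any waveform collapses into a square wave.

```python
import math


def quantize(sample, bit_depth):
    """Round a sample in [-1.0, 1.0] to the nearest of 2**bit_depth levels."""
    levels = 2 ** bit_depth
    step = 2.0 / (levels - 1)  # distance between two neighbouring levels
    index = round((sample + 1.0) / step)
    return index * step - 1.0


def max_error(bit_depth, points=1000):
    """Largest rounding error over one cycle of a sine wave."""
    worst = 0.0
    for i in range(points):
        sample = math.sin(2 * math.pi * i / points)
        worst = max(worst, abs(sample - quantize(sample, bit_depth)))
    return worst


if __name__ == "__main__":
    for depth in (1, 4, 8, 16):
        print(depth, "bit: largest error", max_error(depth))
```

The printed error shrinks as the bit depth grows. The coarse steps at low bit depths are exactly the audible 'limitations' that give low-tech sound its character.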

The potential of reintroducing sonification

It's hard to imagine companies equipping computers with artificial operational noises today. With the options to replace the mechanical sound of the combustion engine in the back of our minds, it's interesting to consider how this applies to speculative sound design for modern computing and networking. It's important to look back at the original meaning and function of sounds, as they connected us to the material world of computation. I am not advocating providing modern computers with ambient soundscapes of computer fan sounds played softly over the speakers, or making thermostats play dial-up modem sounds whenever we adjust the temperature with our phones. Although that sounds funny, I believe complete silence isn't a step forward either. But then what?

If we revalue the origins of audible cues when designing sounds for computer activity today, what role can the CPU and circuitry itself play in this? On the one hand, the CPU is the core of our computing devices. It creates electric pulses going on or off which is the basic principle of binary computation. On the other hand, there is our sensitivity to sonification. If we use this appropriately and in the interest of the user, there is potential in this.

For example, low and comforting static noise patterns generated by the CPU can be used to reveal certain activity. Or tiny audible occurrences such as electrical pulses produced by the circuit can be used to announce a device's existence. We can responsibly use the changing function of sound in different modes of listening: demanding our attention or existing in the periphery. How could this be translated into changes in volume and direction of the sound's image through amplification? How can all these factors emphasize the material characteristics of our computational devices instead of obfuscating their nature and origins? The solution could free us from skeuomorphs and reconnect us to the sole being of the machine.
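One speculative way to translate this into volume is sketched below in Python. Every name and number in it is an assumption made for illustration: `cue_gain`, the threshold of 0.8 and the peripheral gain of 0.1 do not come from Weiser and Seely Brown or from any existing system. The idea is that a running process is never fully silent, stays faint while activity is ordinary, and only moves towards the center of attention when activity becomes unusually high.

```python
def cue_gain(activity, attention_threshold=0.8, periphery_gain=0.1):
    """Map normalised activity (0.0 to 1.0) to a playback gain (0.0 to 1.0).

    All parameter values are illustrative assumptions, not measured ones.
    """
    activity = min(max(activity, 0.0), 1.0)  # clamp out-of-range input
    if activity == 0.0:
        return 0.0  # silence only when nothing is running
    if activity < attention_threshold:
        return periphery_gain  # faint but present: the periphery
    # Above the threshold, ramp linearly from the peripheral gain to full gain.
    span = 1.0 - attention_threshold
    return periphery_gain + (1.0 - periphery_gain) * (activity - attention_threshold) / span


if __name__ == "__main__":
    for level in (0.0, 0.3, 0.8, 0.9, 1.0):
        print(level, "->", round(cue_gain(level), 2))
```

A real design would also have to consider timbre, direction and the differences in how people process stimuli, none of which this sketch attempts.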

A deep sigh from within

referring to this thesis of course), a problem arises. Just before correcting one of the last crooked sentences, the document I am working in freezes. At first I blame the browser, but then I conclude my machine is jammed. Although this happens rarely –my machine served me well over the years– my first reaction is always the same: I desperately start pressing the keys on my keyboard, increasingly faster and harder, although I don't remember it ever solving the problem. The sounds produced by the mechanics of the keys do not match my expectation, as my screen tells me that it is not accepting any input. As I nervously click my mouse and double-check the connectivity of the cable, nothing seems to change.

My machine served me well over time. This rarely happened. It scares me when I think about the chance of losing any of the work I have been doing... I decide to go the hard way and manually reboot my machine by forcefully holding down the power button for a few long-lasting seconds. An almost inaudible electronic clicking sound that comes from deep inside my computer is followed by some kind of sigh. This tells me the motherboard stopped receiving electrical power. After leaving my machine untouched for 5 seconds, awkwardly resting my open hands next to it, I gather the courage to hit the power button again. While I hear the current running through the electrical components somewhere deep inside, I think about the possibility that this will no longer be audible in my next computer. Then a familiar, uncanny noise interrupts this thought. I never thought I would be so relieved to hear a start-up sound.

Epilogue

This work has been produced in the context of the graduation research of Mark van den Heuvel from the Experimental Publishing (XPUB) Master course at the Piet Zwart Institute, Willem de Kooning Academy, Rotterdam University of Applied Sciences.

XPUB is a two year Master of Arts in Fine Art and Design that focuses on the intents, means and consequences of making things public and creating publics in the age of post-digital networks. https://xpub.nl

Thanks to

Many thanks go to Marloes de Valk (adviser), Aymeric Mansoux (second reader), and Tom van den Heuvel (text corrections).

References & Bibliography

Alberts G. (2000) Rekengeluiden. De lichamelijkheid van het rekenen, Informatie & Informatiebeleid 18-1 (2000); pp. 42-47

Alberts G. (2000) Computergeluiden, Informatica & Samenleving, (ed. G. Alberts and R. van Dael), 7–9; 2000, Nijmegen, Katholieke Universiteit Nijmegen

Alberts G. (2003) Het geluid van rekentuig, Een halve eeuw computers in Nederland 2: Nieuwe Wiskrant, 2003, 22, 17–23.

Bijsterveld, K. (2006) Listening to Machines: Industrial Noise, Hearing Loss and the Cultural Meaning of Sound. Interdisciplinary Science Reviews 31.

Blattner, M. Sumikawa, D. and Greenberg, R. (1989) Earcons and Icons: Their Structure and Common Design Principles, Human-Computer Interaction.

Bollmer G. (2020). Technological Materiality and Assumptions About ‘Active’ Human Agency. [ONLINE] Available at: http://digicults.org/files/2016/11/III.1-Bollmer_2015_Technological-materiality.pdf. [Accessed 11 November 2020].

Braguinski, N. and ‘utz’ (2018). What is 1-bit music? Ludomusicology. [online] Available at: https://www.ludomusicology.org/2018/12/09/what-is-1-bit-music/ [Accessed: 21 March 2020]

Casemajor, N. (2015) Digital Materialisms: Frameworks for Digital Media Studies. Westminster Papers in Culture and Communication, 10(1), pp.4-17.

Copeland, J & Long, J., 2021. Alan Turing: How His Universal Machine Became a Musical Instrument. [online] IEEE Spectrum: Technology, Engineering, and Science News. Available at:

Cox, T. J. 2015. The Sound Book: The Science of the Sonic Wonders of the World. New York: W. W. Norton.

DeWitt, A. & Bresin, R. (2007) Sound Design for Affective Interaction. KTH, CSC School of Computer Science and Communication, Dept. of Speech Music and Hearing, Stockholm, Sweden 523-533. 10.1007/978-3-540-74889-2_46.

Fuller, M. (Ed.). (2008). Software studies: A lexicon. MIT Press.

Gaver, W.W. (1987). Auditory Icons: Using Sound in Computer Interfaces. Hum. Comput. Interact., 2, 167-177.

Ihde, D. (1974) The Auditory Dimension. In Listening and Voice: A Phenomenology of Sound, Athens: Ohio University Press. Pp. 49–55

Jensen, R. (1983) The Microcomputer Revolution for Historians, The Journal of Interdisciplinary History, vol. 14, no. 1, 1983, pp. 91–111.

Kanitsakis, S. (2021) An audio comparison between the sounds of Atari’s “Pong” and the silence of Magnavox Odyssey’s…. [online] Medium. Available at: https://stelioskanitsakis.medium.com/an-audio-comparison-between-the-sounds-of-ataris-pong-and-the-silence-of-magnavox-odyssey-s-83e6fac56653 [Accessed 4 March 2021].

Kirschenbaum, M. (2008) Mechanisms: New Media and the Forensic Imagination. Cambridge, MA: MIT Press. PMCid: PMC2576424.

Long, D. (2016) LWLVL Manifesto. [online] Available at: http://www.lwlvl.com/manifesto [Accessed 16 November 2020].

Lialina, O. (2019) Once Again, The Doorknob. [online] Available at: http://mediatheoryjournal.org/olia-lialina-once-again-the-doorknob/ [Accessed 23 March 2021].

Moore, G. (1965) Cramming More Components onto Integrated Circuits, Electronics, vol. 38, Apr. 1965, p. 1.

Norman, D. (1990) Why Interfaces Don’t Work. In Laurel, B. (Ed.) The Art of Human-Computer Interface Design. Reading, Mass.: Addison-Wesley Publishing Corporation.

Norman, D. (2008) Affordances and Design [online] Available at: https://jnd.org/affordances_and_design/ [Accessed 2 January 2020].

Parikh, P. (2019) Here’s why the camera shutter sound can’t be turned off in Japan. [online] Available at: https://eoto.tech/camera-shutter-sound/ [Accessed 1 May 2021].

Parikka, J. (2015) A Geology of Media, Minneapolis/London: University of Minnesota Press.

Ploeger, D. (2020) Imagining the Seamless Cyborg: Computer System Sounds as Embodying Technologies, The Oxford Handbook of Sound and Imagination, Volume 2, 579 - 593.

Raskin, J. (2000) The Humane Interface. New Directions for Designing Interactive Systems. Reading, Mass: Pearson Education.

Radvansky, G. (2005). Human Memory. Boston: Allyn and Bacon. pp. 65–75. ISBN 978-0-205-45760-1.

Sambaer, M. (2020) All you need to know about vehicle active sound design [online] Available at: https://blogs.sw.siemens.com/simcenter/the-sound-of-future-active-sound-design/ [Accessed 22 March 2021].

Smith, J.O. (2010) Physical Audio Signal Processing. [online] Available at: http://ccrma.stanford.edu/~jos/pasp/ [Accessed 29 January 2021].

Stockburger, A. (2015) Games. In See this Sound: Audiovisuology, A Reader. Köln: Verlag der Buchhandlung Walther König.

Strous, R.D., Cowan, N., Ritter, W. and Javitt, D.C. (1995) Auditory sensory (“echoic”) memory dysfunction in schizophrenia, Am J Psychiatry. 152 (10): 1517–9. doi:10.1176/ajp.152.10.1517. PMID 7573594.

Timeline of Computer History (2021) Computer History Museum. [online] Available at: https://www.computerhistory.org/timeline/ [Accessed 14 April 2021].

Troise, B. (2020) The 1-Bit Instrument. Journal of Sound and Music in Games, Volume 1, number 1, 44 - 74. [online] Available at: http://online.ucpress.edu/jsmg/article-pdf/1/1/44/378624/jsmg_1_1_44.pdf [Accessed 16 November 2020].

Weiser, M. and Seely Brown, J. (1995) Designing Calm Technology. [online] Available at: https://people.csail.mit.edu/rudolph/Teaching/weiser.pdf [Accessed 16 November 2020].

Weizenbaum, J. (1966) ELIZA: A Computer Program For the Study of Natural Language Communication Between Man And Machine, MIT Press.

Weizenbaum, J. (1976) Computer Power and Human Reason: From Judgment to Calculation. San Francisco: W. H. Freeman & Co.

Wikipedia.org. (2013) Echoic memory. [online] Available at: https://en.wikipedia.org/wiki/Echoic_memory [Accessed 1 February 2021].

Wong, R. (2015) The Evolution of Windows Startup Sounds, from Windows 3.1 to 10. Mashable. [online] Available at: https://mashable.com/archive/windows-evolution-startup-sounds [Accessed 20 May 2022].

Credits

The layout for this thesis is based on Tufte CSS by Edward Tufte.